MSU Defenses Video Quality Metrics Benchmark

Check the robustness of your IQA/VQA metric to adversarial attacks

Khaled Abud, Georgii Bychkov, Ekaterina Shumitskaya, Vyacheslav Napadovsky, Anastasia Antsiferova, Sergey Lavrushkin

Key features of the Benchmark

- 20+ evaluated defense methods of different types (purification, adversarial training, certified robustness)

- 14 white-box (WB) and black-box (BB) adversarial attacks, including FGSM-based, Universal Adversarial Perturbation (UAP)-based, and perceptually-aware attacks

- Automatic cloud-based pipeline for evaluation
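To make the attack and defense terms above concrete, here is a minimal Python sketch of a single FGSM step against a quality metric, followed by a simple purification defense. Everything in it is a hypothetical illustration, not the benchmark's code: the linear `toy_metric`, its weights, and the bit-depth-reduction defense `quantize_purify` are chosen only for readability. Real IQA/VQA metrics are neural networks whose input gradients would come from an autograd framework.

```python
from typing import List

Image = List[float]  # flattened grayscale image, pixel values in [0, 1]


def toy_metric(x: Image, w: Image) -> float:
    """Hypothetical differentiable quality metric: a weighted sum of pixels."""
    return sum(wi * xi for wi, xi in zip(w, x))


def toy_metric_grad(x: Image, w: Image) -> Image:
    """Gradient of toy_metric w.r.t. the image; for a linear model it is just w."""
    return list(w)


def sign(v: float) -> float:
    return float((v > 0) - (v < 0))


def fgsm_raise_score(x: Image, grad: Image, eps: float) -> Image:
    """Single FGSM step: move each pixel by eps in the direction that increases
    the metric score, then clip back to the valid pixel range [0, 1]."""
    return [min(1.0, max(0.0, xi + eps * sign(gi))) for xi, gi in zip(x, grad)]


def quantize_purify(x: Image, levels: int = 4) -> Image:
    """Hypothetical purification defense (bit-depth reduction): snap every pixel
    to one of levels + 1 grey values before the metric sees the image."""
    return [round(xi * levels) / levels for xi in x]


if __name__ == "__main__":
    w = [1.0, -1.0] * 4   # toy metric weights (assumed for illustration)
    clean = [0.5] * 8     # flat grey image
    adv = fgsm_raise_score(clean, toy_metric_grad(clean, w), eps=0.1)
    print("clean score:   ", toy_metric(clean, w))
    print("attacked score:", toy_metric(adv, w))
    print("purified score:", toy_metric(quantize_purify(adv), w))
```

An FGSM step changes every pixel by at most eps, so the perturbation stays within an L∞ budget while still inflating the score. In this toy setup quantization removes the attack completely, but only because the clean pixels lie exactly on a quantization level and eps is smaller than half the quantization step. Practical purification defenses must balance removing such perturbations against distorting the very image content the metric is supposed to assess.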

What’s new

- 30.06.2024 Alpha version of the benchmark released

Dataset

The dataset can be found here.

Results

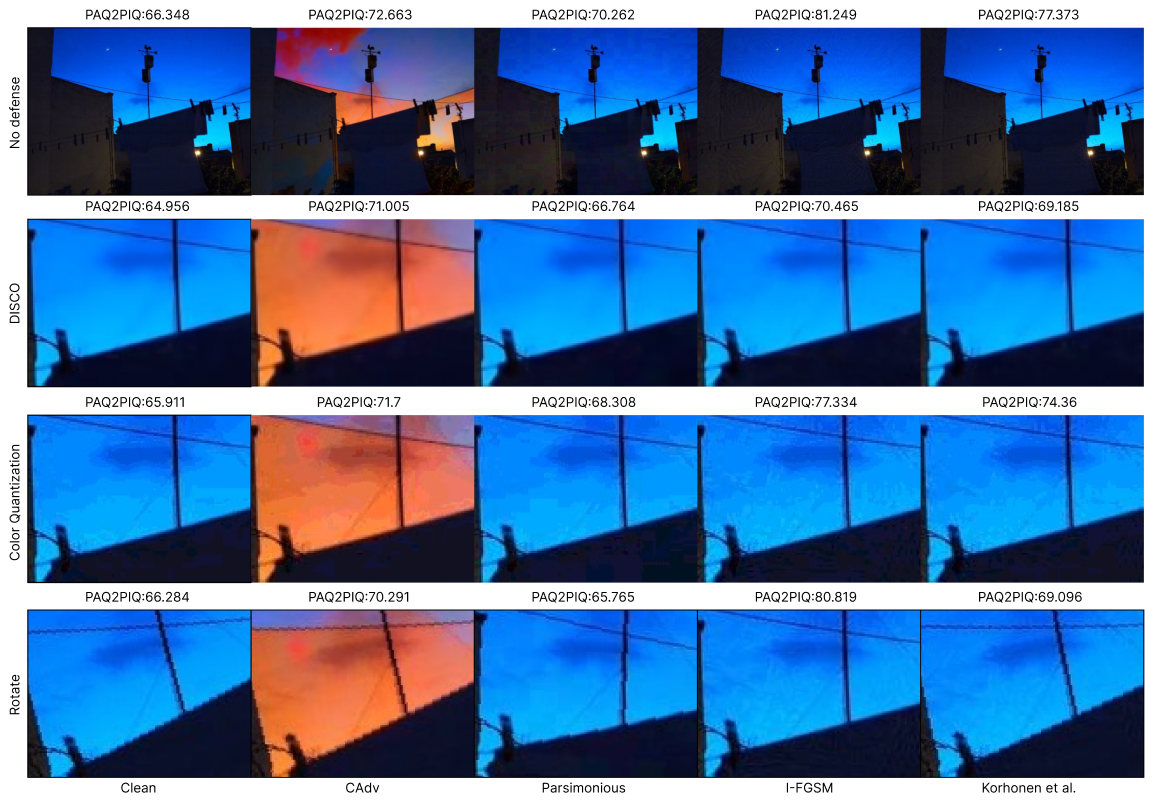

Non-adaptive leaderboard for adversarial purification defenses. The evaluation metrics are averaged across all images and attacks. For each defense, the parameter values that yield the highest correlations on adversarial images are selected:

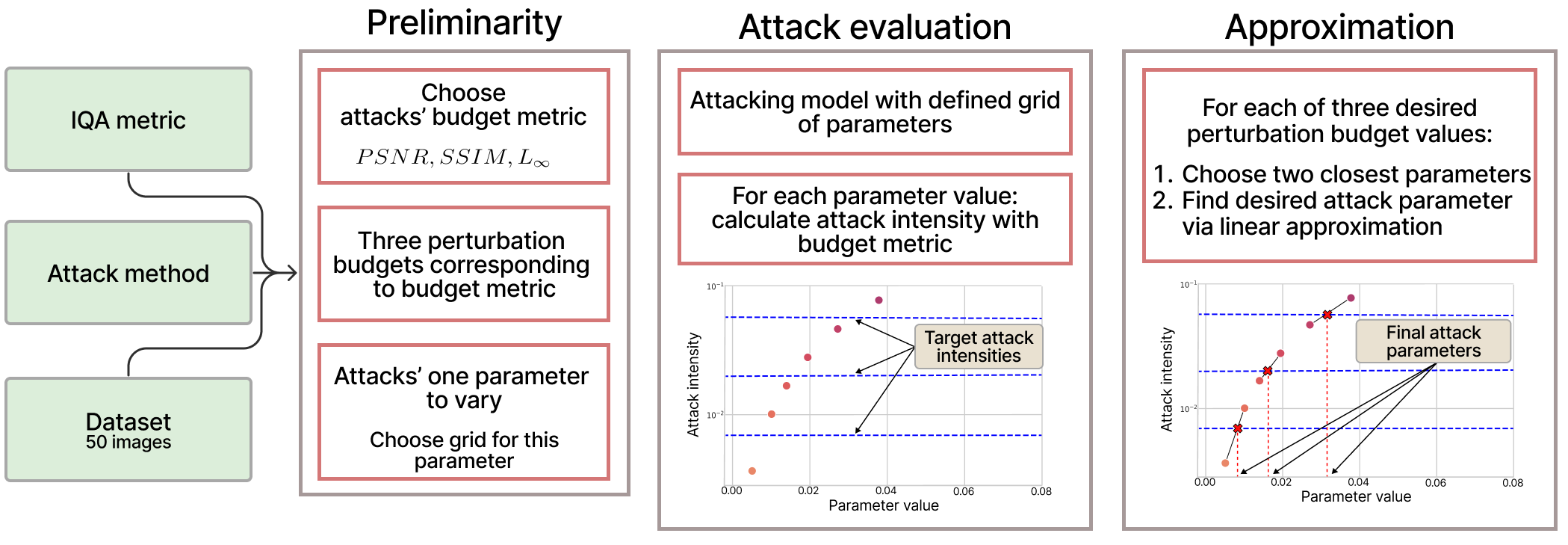

Methodology and dataset

Cite us

The full text of our paper and the supplementary material are available via the links.

@article{guardians2024,
  title={Guardians of Image Quality: Benchmarking Defenses Against Adversarial Attacks on Image Quality Metrics},
  author={Alexander Gushchin and Khaled Abud and Georgii Bychkov and Ekaterina Shumitskaya and Anna Chistyakova and Sergey Lavrushkin and Bader Rasheed and Kirill Malyshev and Dmitriy Vatolin and Anastasia Antsiferova},
  journal={arXiv preprint arXiv:2408.01541},
  year={2024}
}

Contacts

We would greatly appreciate any suggestions and ideas on how to improve our benchmark. Please contact us via email: vqa@videoprocessing.ai.


MSU Benchmark Collection

- Super-Resolution Quality Metrics Benchmark

- Video Colorization Benchmark

- Video Saliency Prediction Benchmark

- LEHA-CVQAD Video Quality Metrics Benchmark

- Learning-Based Image Compression Benchmark

- Super-Resolution for Video Compression Benchmark

- Defenses for Image Quality Metrics Benchmark

- Deinterlacer Benchmark

- Metrics Robustness Benchmark

- Video Upscalers Benchmark

- Video Deblurring Benchmark

- Video Frame Interpolation Benchmark

- HDR Video Reconstruction Benchmark

- No-Reference Video Quality Metrics Benchmark

- Full-Reference Video Quality Metrics Benchmark

- Video Alignment and Retrieval Benchmark

- Mobile Video Codecs Benchmark

- Video Super-Resolution Benchmark

- Shot Boundary Detection Benchmark

- The VideoMatting Project

- Video Completion

- Codecs Comparisons & Optimization

- VQMT

- MSU Datasets Collection

- Metrics Research

- Video Quality Measurement Tool 3D

- Video Filters

- Other Projects