MSU HDR Video Reconstruction Benchmark: HLG

The most comprehensive comparison of HDR video reconstruction methods

Nikolay Safonov

Key features of the Benchmark

- Comparison of 14 methods of HDR video reconstruction

- A new private test dataset of 20 different scenes: fireworks, flowers, soccer, and others

- 10 metrics for restoration quality assessment

- HDR video player for self-assessment of quality

- Subjective comparison

- Separate comparisons of video restoration for the two most popular HDR transfer curves: PQ (coming soon) and HLG

What’s new

- 01.08.2024 Our paper “A New HDR Video Reconstruction Benchmark, Dataset and Metric” was published.

- 28.12.2023 Our paper “A New HDR Video Reconstruction Benchmark, Dataset and Metric” was accepted to ICDSP’2024.

- 10.05.2022 Benchmark Release!

Leaderboard

Brief information about all metrics and the subjective study is available in the methodology.

| Rank | Model | Subjective | HDR-VDP-3 | HDR-VQM | HDR-PSNR | HDR-SSIM | PQ-NIQE | PQ-PSNR | PQ-SSIM | PQ-VMAF | Shifted HDR-PSNR |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | GT | 4.9064 | 10.0000 | 0.0000 | inf | 1.0000 | 5.6412 | inf | 1.0000 | 100.0000 | inf |
| 2 | DeepHDR | 3.7604 | 8.0572 | 0.1000 | 33.5266 | 0.9907 | 5.0832 | 21.9155 | 0.9584 | 89.5382 | 37.0040 |
| 3 | Maxon | 3.6044 | 8.2153 | 0.0622 | 45.6478 | 0.9949 | 4.9400 | 35.1188 | 0.9846 | 89.9478 | 45.3267 |
| 4 | HDRCNN | 3.3537 | 6.3880 | 0.1919 | 33.0200 | 0.9663 | 4.9391 | 21.2604 | 0.8539 | 50.5080 | 35.0804 |
| 5 | SingleHDR | 3.2459 | 8.4180 | 0.2630 | 34.2871 | 0.9845 | 5.5764 | 25.8042 | 0.9606 | 70.6792 | 42.5944 |
| 6 | HDRTV | 2.7886 | 6.9813 | 0.1296 | 35.9721 | 0.9918 | 5.4744 | 22.4636 | 0.9555 | 85.6109 | 26.8880 |
| 7 | KovOliv | 2.3654 | 7.4724 | 0.1329 | 32.4223 | 0.9937 | 4.8269 | 20.1295 | 0.9389 | 91.3118 | 28.9170 |
| 8 | Huo | 1.7939 | 8.9004 | 0.2103 | 30.0900 | 0.9720 | 4.9126 | 23.3886 | 0.9527 | 82.4653 | 44.4314 |
| 9 | HDRUnet | 1.3770 | 6.6857 | 0.1830 | 34.9894 | 0.9845 | 6.7712 | 24.7347 | 0.9388 | 92.1209 | TBP* |
| 10 | ExpNet | 1.0268 | 7.5910 | 0.1942 | 34.0555 | 0.9892 | 5.2161 | 24.2994 | 0.9321 | 61.6722 | 40.8574 |
| 11 | KUnet | 0.6530 | 6.1543 | 0.2126 | 32.5082 | 0.9838 | 7.3623 | 24.1380 | 0.9236 | 77.6478 | TBP* |
| 12 | twostageHDR | 0.4650 | 6.6004 | 0.1350 | 31.6717 | 0.9864 | 7.7687 | 22.4022 | 0.9139 | 46.1830 | TBP* |
| 13 | Kuo | TBP* | 8.6776 | 0.0579 | 40.7642 | 0.9954 | 5.0287 | 34.9679 | 0.9862 | 94.0270 | 47.4612 |
| 14 | HuoPhys | TBP* | 7.0618 | 0.1518 | 32.6570 | 0.9946 | 5.0754 | 18.7458 | 0.9383 | 90.0419 | 22.1553 |
| 15 | Akyuz | TBP* | 6.4114 | 0.2521 | 28.1420 | 0.9917 | 4.7872 | 14.6789 | 0.8535 | 71.5814 | 23.3069 |
| 16 | FHDR | TBP* | 8.7709 | 0.0107 | 40.4126 | 0.9960 | 5.0478 | 28.5834 | 0.9793 | 96.3042 | TBP* |
| 18 | IntrinsicHDR | TBP* | 7.8860 | 0.1319 | 34.4380 | 0.9828 | 6.100 | 25.2274 | 0.9462 | 81.1912 | TBP* |
| 19 | ITM25 | TBP* | 7.9392 | 0.1124 | 33.2922 | 0.9819 | 6.3479 | 23.7448 | 0.9363 | 74.2757 | TBP* |
| 20 | ITMLUT | TBP* | 8.7414 | 0.0231 | 36.711 | 0.9946 | 5.4742 | 24.7033 | 0.9717 | 90.1506 | TBP* |
| 21 | singhdr-torch | TBP* | 7.7930 | 0.0654 | 31.2827 | 0.9900 | 5.7695 | 21.9822 | 0.9320 | 78.3941 | TBP* |

TBP* – to be published
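
To make the PQ-prefixed columns more concrete, here is a minimal sketch of one common way to compute a PSNR in the PQ domain: both signals are passed through the SMPTE ST 2084 (PQ) inverse EOTF and the PSNR is then taken on the encoded values. The function names `pq_encode` and `pq_psnr` are hypothetical, the inputs are assumed to be absolute luminance values in cd/m², and the benchmark's own procedure (described in its methodology) may differ.

```python
import math

# SMPTE ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_encode(nits):
    """Map absolute luminance in cd/m^2 (clipped to 0..10000) to a PQ signal in [0, 1]."""
    y = min(max(nits, 0.0), 10000.0) / 10000.0
    yp = y ** M1
    return ((C1 + C2 * yp) / (1.0 + C3 * yp)) ** M2


def pq_psnr(reference, restored):
    """PSNR (dB) of two equal-length luminance sequences, measured on PQ-encoded values.

    Assumption for illustration: the peak signal value is 1.0 (full PQ range).
    """
    if len(reference) != len(restored) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum(
        (pq_encode(r) - pq_encode(t)) ** 2 for r, t in zip(reference, restored)
    ) / len(reference)
    if mse == 0.0:
        return math.inf  # identical signals
    return 10.0 * math.log10(1.0 / mse)
```

Under this definition, comparing a signal with itself yields an infinite PSNR, which is consistent with the `inf` entries in the GT row of the table.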

Charts

This section shows the values of each metric for every individual video, along with the average values. The "Video" selector lists the video names. For convenience, the Dataset tab has a preview of each video matched to its full name.

Results

In this section, you can quickly evaluate the quality of the algorithms yourself. The video player shows the HDR video if your device supports this technology. We recommend opening this video in Google Chrome or Safari.

Visualization

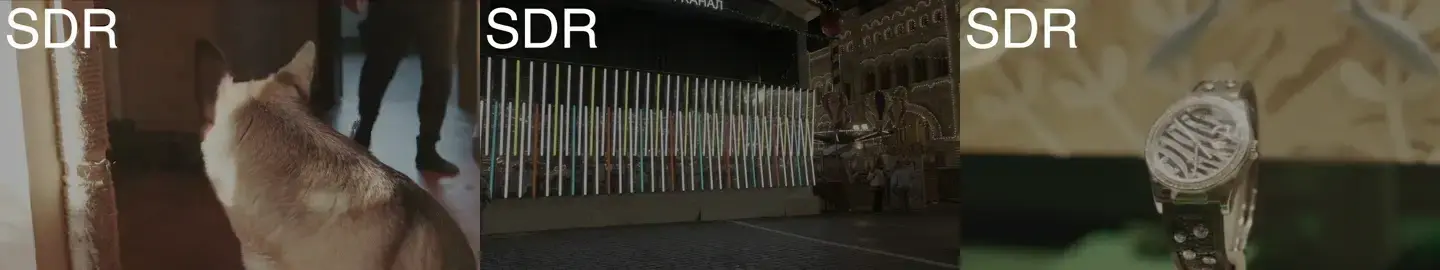

This section visualizes the result of each algorithm.

- The first row is a preview

- The second row is a tonemapped crop

- The third and fourth rows are two exposures, which show in detail the differences in the dark and bright areas

Please note that we do not show GT exposures, as this information makes it possible to obtain the HDR version of the GT video.
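
The idea behind the two exposure rows can be sketched as follows: the same linear-light HDR frame is scaled by a power of two, clipped to the displayable range, and gamma-encoded for an SDR screen. This is only an illustration. The helper `render_exposure`, the stop offsets, the sample pixel values, and the 2.2 display gamma are all assumptions, since the page does not state which values the benchmark uses.

```python
def render_exposure(linear_pixels, stops, gamma=2.2):
    """Scale linear-light values by 2**stops, clip to [0, 1], and gamma-encode for SDR display."""
    gain = 2.0 ** stops
    return [min(max(v * gain, 0.0), 1.0) ** (1.0 / gamma) for v in linear_pixels]


# Hypothetical linear-light pixel values, where 1.0 stands for SDR white.
frame = [0.002, 0.05, 0.5, 4.0, 16.0]

# Positive stops brighten the shadows but clip everything at or above SDR white.
dark_detail = render_exposure(frame, stops=3)

# Negative stops darken the frame so that highlight values fit below the clip point.
bright_detail = render_exposure(frame, stops=-4)
```

In the first rendering the three brightest samples all clip to 1.0, while in the second they stay distinguishable, which is why a pair of exposures exposes differences that a single tonemapped image can hide.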

Your method submission

Verify the restoration ability of your HDR Video Reconstruction algorithm and compare it with state-of-the-art solutions. You can see information about all other participants here.

Cite us

@inproceedings{10.1145/3653876.3653896,
  author = {Voronin, Mikhail and Safonov, Nickolay and Vatolin, Dmitriy},
  title = {A New HDR Video Reconstruction Benchmark, Dataset and Metric},
  year = {2024},
  isbn = {9798400709029},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  url = {https://doi.org/10.1145/3653876.3653896},
  doi = {10.1145/3653876.3653896},
  abstract = {This paper discusses the challenges of evaluating SDR-to-HDR methods. The lack of comprehensive comparisons between existing methods prompted us to independently evaluate 14 HDR reconstruction approaches. To achieve this, we created a new closed HDR dataset. The evaluation involves objective quality metrics, such as PSNR, SSIM, MS-SSIM and NIQE adapted for HDR content. The study presents the first publicly available subjective scores for HDR reconstruction methods. The perceived quality of the reconstructed HDR videos is assessed by conducting a pairwise comparison. The research shows the strengths and weaknesses of each method, offering valuable insights for HDR video reconstruction and highlighting the need for improved quality assessment metrics in this domain. In addition, we introduce a novel quality evaluation metric, which leverages machine learning techniques to measure the effectiveness of HDR reconstruction methods.},
  booktitle = {Proceedings of the 2024 8th International Conference on Digital Signal Processing},
  pages = {27–32},
  numpages = {6},
  keywords = {hdr reconstruction, inverse-tone-mapping, video quality assessment},
  location = {Hangzhou, China},
  series = {ICDSP '24}
}

You can find the text of our paper through the link.

1. Download input data. Download the SDR videos.

2. Apply your algorithm. Convert the SDR videos to HDR using your algorithm. You can also send us the code of your method or an executable file, and we will run it ourselves.

3. Send us the result. Send an email to itm-benchmark@videoprocessing.ai with the following information:

You can verify the results of current participants or estimate the performance of your method on public samples of our dataset (clips "Dog" and "Rolex"). Send us an email with a request to share them with you.
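
As a rough illustration of what the "apply your algorithm" step has to produce for the HLG track, the sketch below maps a normalized SDR sample to an HLG signal value using the HLG OETF from ITU-R BT.2100. It is a trivial baseline and not a reconstruction method: it restores no clipped highlights and works on single normalized values rather than video frames. The function `naive_sdr_to_hlg`, the pure 2.4 power decoding of SDR, and the `sdr_white=0.265` scale (chosen so that SDR white lands near 75% of the HLG signal range) are assumptions for this example, not part of the benchmark.

```python
import math

# HLG OETF constants from ITU-R BT.2100.
A = 0.17883277
B = 1.0 - 4.0 * A
C = 0.5 - A * math.log(4.0 * A)


def hlg_oetf(e):
    """Map normalized scene-linear light in [0, 1] to a non-linear HLG signal in [0, 1]."""
    e = min(max(e, 0.0), 1.0)
    if e <= 1.0 / 12.0:
        return math.sqrt(3.0 * e)
    return A * math.log(12.0 * e - B) + C


def naive_sdr_to_hlg(sdr_value, sdr_gamma=2.4, sdr_white=0.265):
    """Illustrative baseline: decode SDR gamma, scale into HLG scene-linear range, re-encode.

    sdr_gamma and sdr_white are assumptions made for this sketch.
    """
    linear = min(max(sdr_value, 0.0), 1.0) ** sdr_gamma
    return hlg_oetf(linear * sdr_white)
```

With these assumed parameters, `naive_sdr_to_hlg(1.0)` is approximately 0.75, leaving the upper quarter of the HLG signal range unused. Filling that range with plausible highlight detail is the part an actual reconstruction method is expected to contribute.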

Contacts

We would highly appreciate any suggestions and ideas on how to improve our benchmark. For questions and proposals, please contact us: itm-benchmark@videoprocessing.ai

