Subjective QRS Benchmark

Explore subjective comparisons of video restoration and compression algorithms

Everyone is welcome to participate! Run your favorite Deblurring/Denoising/Super-Resolution/Compression method on our dataset and send us the results to see how well it performs. Check the “Submitting” section for details.

What’s New

- March 2nd, 2026: Release

Introduction

Subjective QRS Benchmark provides large-scale human preference rankings for video restoration and compression algorithms. We start from a large pool of openly licensed video segments and select a diverse subset of 1,000 source clips via feature-based clustering.
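As a rough illustration of selecting a diverse subset via feature-based clustering, the sketch below runs a plain k-means over per-clip feature vectors and keeps the clip closest to each centroid. The features, the clip names, the use of k-means, and the "nearest to centroid" rule are all assumptions made for this example; the benchmark's actual features and clustering method are described in the “Methodology” section, not here.

```python
import math
import random


def kmeans(points, k, iters=20, seed=0):
    """Plain Lloyd's k-means on lists of floats; returns the final centroids."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        # Assignment step: attach every point to its nearest centroid.
        groups = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centroids[c]))
            groups[nearest].append(p)
        # Update step: move each centroid to the mean of its group.
        for c, group in enumerate(groups):
            if group:  # keep the old centroid if a cluster went empty
                centroids[c] = [sum(col) / len(group) for col in zip(*group)]
    return centroids


def select_diverse(features, k, seed=0):
    """Pick one clip per cluster: the unused clip closest to each centroid."""
    names = sorted(features)
    points = [features[n] for n in names]
    chosen = []
    for centroid in kmeans(points, k, seed=seed):
        best = min(
            (n for n in names if n not in chosen),
            key=lambda n: math.dist(features[n], centroid),
        )
        chosen.append(best)
    return chosen


# Hypothetical 2-D features per clip (for example, spatial and temporal
# complexity). Real feature vectors would be much higher-dimensional.
features = {
    "clip_a": [0.10, 0.20],
    "clip_b": [0.12, 0.18],
    "clip_c": [0.90, 0.85],
    "clip_d": [0.88, 0.91],
    "clip_e": [0.50, 0.05],
    "clip_f": [0.52, 0.07],
}
print(select_diverse(features, k=3))
```

In this toy setup `k` plays the role of the target subset size (1,000 in the benchmark), so each selected clip stands in for one region of the feature space.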

Each source clip is then processed by multiple algorithm families:

- Deblurring

- Denoising

- Super-Resolution

- Compression

The algorithms' outputs form the benchmark’s pool of distorted videos.

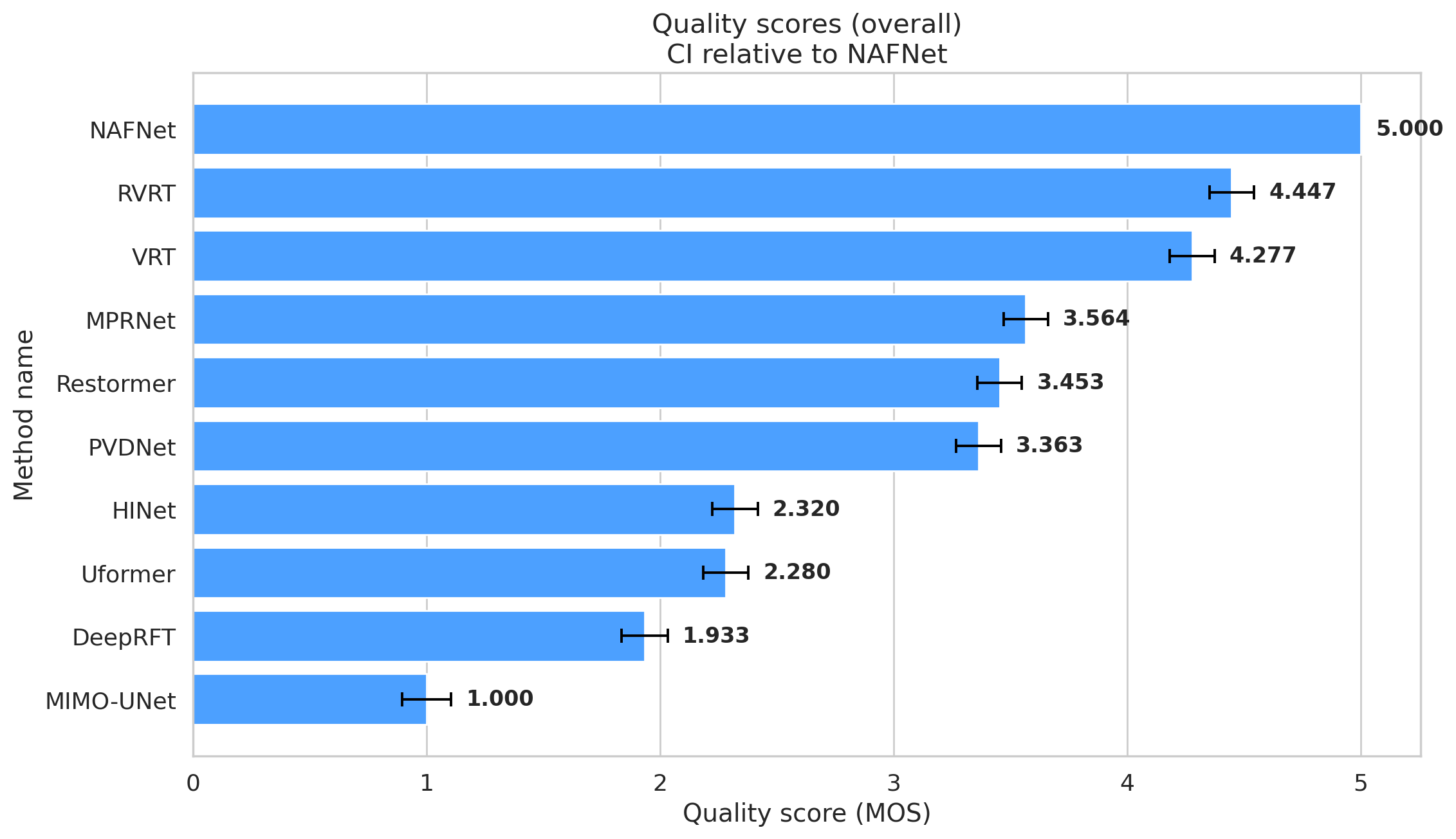

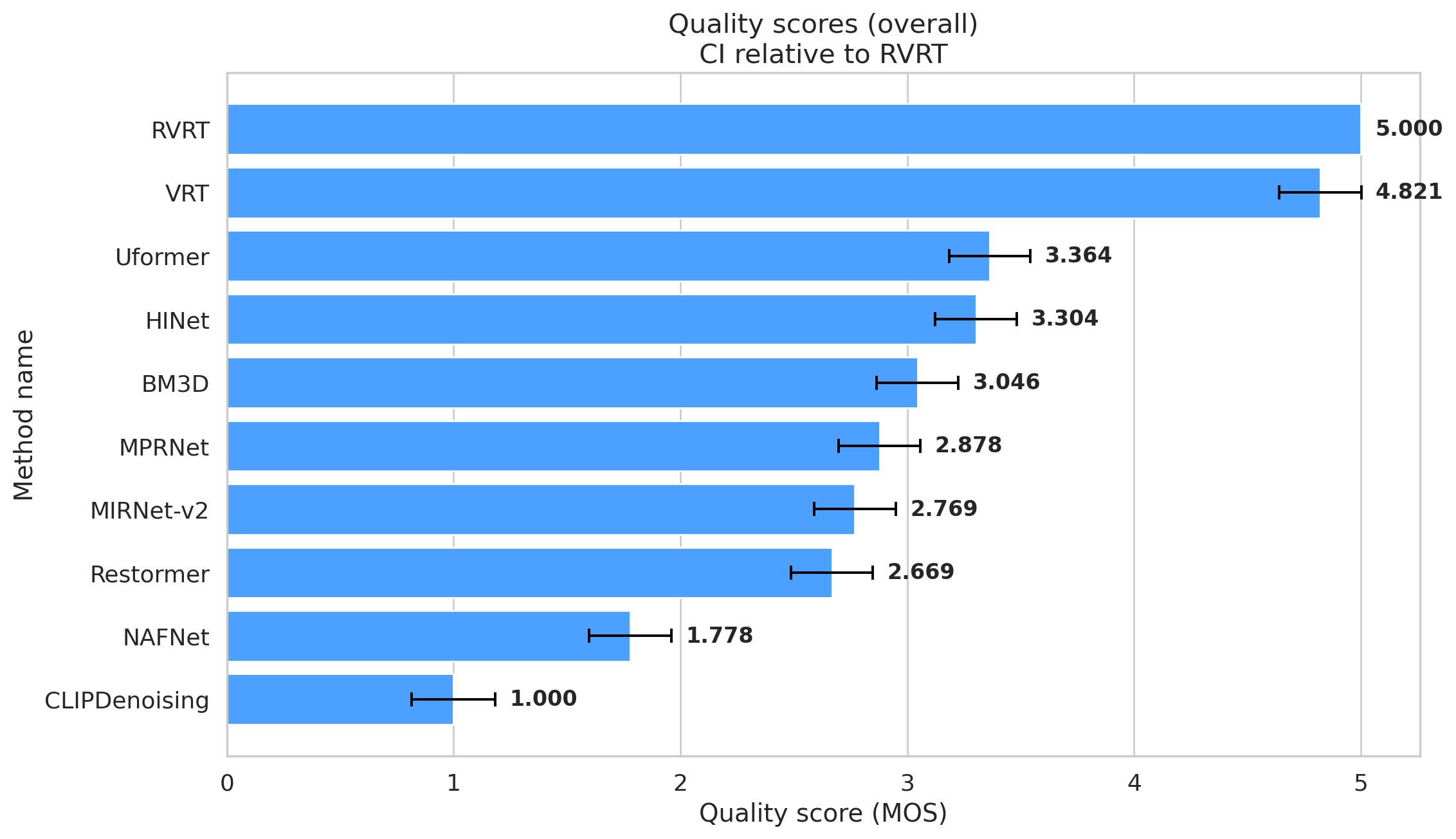

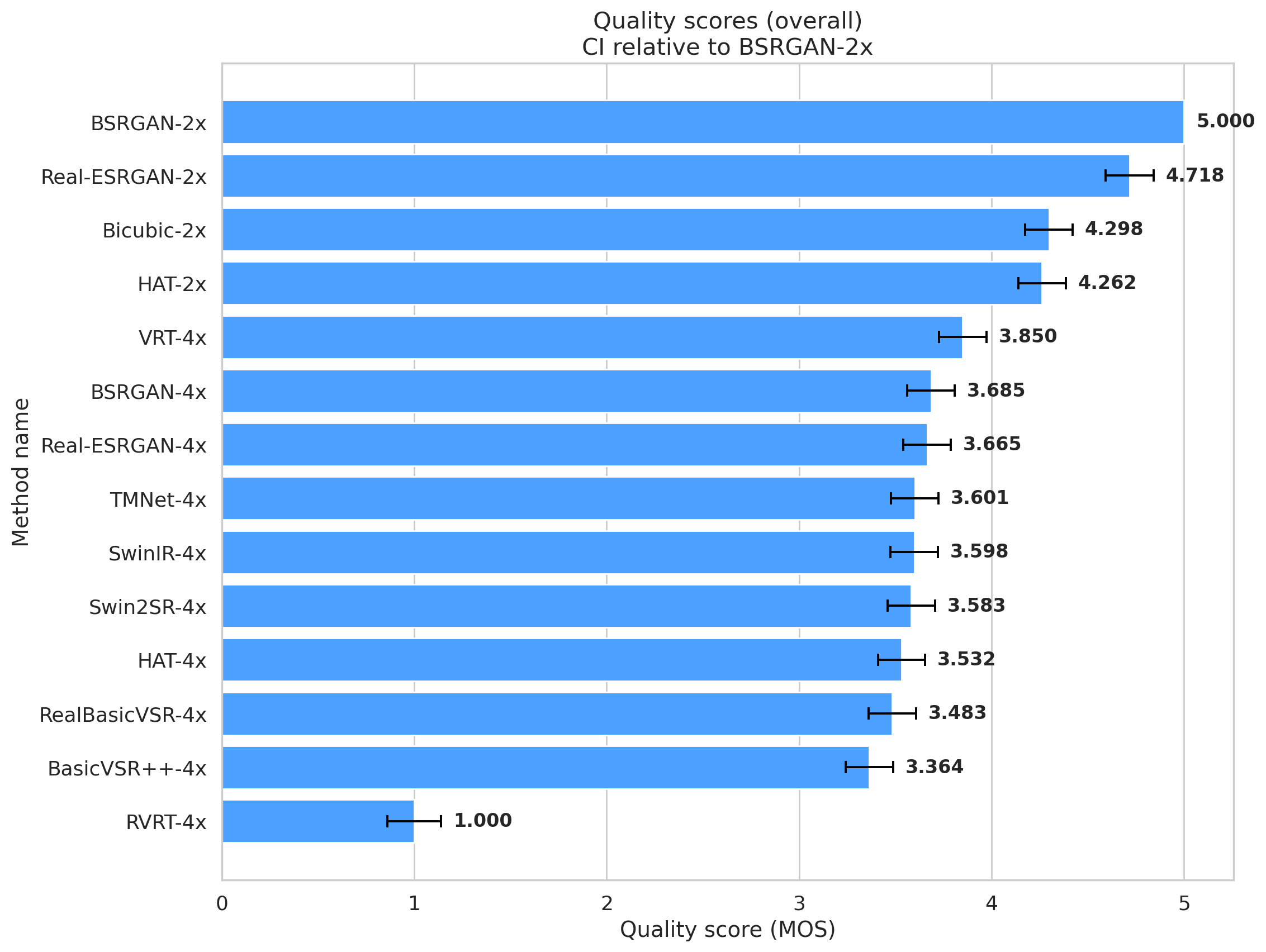

We conduct a large crowdsourcing study on these outputs using pairwise subjective comparisons and aggregate the collected votes into the task-specific rankings and subjective scores shown below.
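To make the aggregation step concrete, here is a minimal sketch of turning pairwise votes into a ranking. It assumes a Bradley-Terry model fitted with the standard minorization-maximization (MM) updates; this page does not state which aggregation model the benchmark uses, so treat the model choice, the function name `bradley_terry`, and the method names as illustrative assumptions.

```python
from collections import defaultdict


def bradley_terry(votes, iters=200):
    """Fit Bradley-Terry strengths from (winner, loser) votes via MM updates.

    Assumes every vote compares two different methods and there are no ties.
    """
    wins = defaultdict(float)         # total wins per method
    pair_counts = defaultdict(float)  # number of comparisons per unordered pair
    methods = set()
    for winner, loser in votes:
        wins[winner] += 1
        pair_counts[frozenset((winner, loser))] += 1
        methods.update((winner, loser))
    if not methods:
        return {}

    strength = {m: 1.0 for m in methods}
    for _ in range(iters):
        updated = {}
        for m in methods:
            # Sum n_ij / (p_i + p_j) over every pair that involves method m.
            denom = 0.0
            for pair, n in pair_counts.items():
                if m in pair:
                    (other,) = pair - {m}
                    denom += n / (strength[m] + strength[other])
            updated[m] = wins[m] / denom if denom else strength[m]
        # Rescale so that the strengths average to 1 (the scale is arbitrary).
        total = sum(updated.values())
        strength = {m: s * len(methods) / total for m, s in updated.items()}
    return strength


# Hypothetical votes: each tuple is (preferred method, other method).
votes = (
    [("method_a", "method_b")] * 8 + [("method_b", "method_a")] * 2
    + [("method_b", "method_c")] * 7 + [("method_c", "method_b")] * 3
    + [("method_a", "method_c")] * 9 + [("method_c", "method_a")] * 1
)
scores = bradley_terry(votes)
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
```

Under this model, the ratio of two strengths estimates the odds that one method is preferred over the other, which is why the fitted values can serve as subjective scores and not only as an ordering.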

Scroll below for comparison tables of model results.

Deblurring Leaderboard

Denoising Leaderboard

Super-Resolution Leaderboard

Compression Leaderboard

Submitting

To add your method to the benchmark, follow these steps:

1. Download the dataset

2. Apply your method to the dataset

3. Send the results to subjective-qrs-benchmark@videoprocessing.ai

If you have any suggestions or questions, please contact us: subjective-qrs-benchmark@videoprocessing.ai

Get Notifications About the Updates of This Benchmark

Do you want to be the first to discover the best new Deblurring/Denoising/Super-Resolution/Compression algorithm? We can notify you about this benchmark’s updates: simply submit your preferred email address using the form below. We promise not to send you unrelated information.

Further Reading

Check the “Methodology” section to learn how we prepare our dataset.

Check the “Participants” section to learn which method implementations we use.


