MSU Subjective Comparison of Modern Video Codecs

- Project head: Dr. Dmitriy Vatolin

- Organization of the assessment: Alexander Parshin, Oleg Petrov

- Refinement, translation: Oleg Petrov

- Verification: Alexander Parshin, Artem Titarenko

About the comparison

Tested codecs:

- DivX 6.0

- Xvid 1.1.0

- x264

- WMV 9.0

Bitrates:

- 690 kbps

- 1024 kbps

Number of sequences: 4

Number of experts: 50

Download MSU Subjective Comparison of Modern Video Codecs (32 pages in PDF, 1.38 MB)

Goals of the subjective comparison

During the last few years, our Graphics & Media Lab at Moscow State University has carried out many comparisons of video, audio and image codecs. All of them used objective metrics such as PSNR, VQM or SSIM. This raised reasonable questions about how well objective measures reflect subjectively perceived quality, which is the main parameter of a codec’s performance. The goals of our assessment are a subjective comparison of new versions of popular video codecs, a comparison of the results with objective metrics, and testing of the subjective assessment technology.

Main parts of the comparison:

- Subjective comparison of video codecs.

- Comparison of the results with objective metrics.

Subjective comparison

The main quality parameter for a codec is the subjective impression a viewer gets from the compressed video. This is the main idea of subjective comparison methods: the quality score of a compressed sequence is the average opinion of a group of experts on its quality (MOS, Mean Opinion Score).
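As a minimal sketch of the MOS idea, the Python snippet below averages the experts' ratings for one sequence and then averages the per-sequence MOS values for one codec. The helper names `mos` and `average_mos`, the 0-100 rating scale and the rating values are hypothetical illustrations, not taken from the MSU tool or the comparison data.

```python
from statistics import mean

def mos(scores):
    """Mean Opinion Score: arithmetic mean of all experts' ratings of one sequence."""
    if not scores:
        raise ValueError("at least one rating is required")
    return mean(scores)

def average_mos(ratings_by_sequence):
    """Average of per-sequence MOS values, e.g. one codec over all test sequences."""
    if not ratings_by_sequence:
        raise ValueError("at least one sequence is required")
    return mean(mos(scores) for scores in ratings_by_sequence.values())

# Hypothetical ratings (0-100 scale assumed) given by five experts to one codec.
codec_ratings = {
    "sequence_1": [62, 70, 55, 68, 65],
    "sequence_2": [48, 52, 50, 57, 43],
}
print(mos(codec_ratings["sequence_1"]))
print(average_mos(codec_ratings))
```

Averaging per-sequence MOS values, rather than pooling all ratings, gives each test sequence equal weight even if the number of ratings differs between sequences; whether the comparison weights sequences this way is an assumption.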

There are lots of methodologies of subjective assessment, many of them

are implemented in MSU Perceptual Video Quality

tool

that was used for the assessment. Our comparison is using SAMVIQ -

method that was recently developed by EBU (European Broadcasting Union),

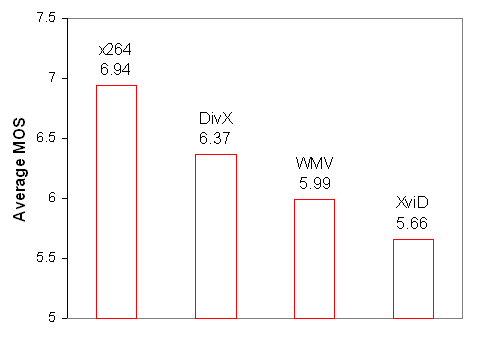

PDF with the comparison contains its description. On a graph below you

can see average MOS for all codecs, the higher the better. Probably,

XviD results could be improved by switching on deblocking algorithm

(this algorithm isn’t used by default).

Average MOS for all codecs

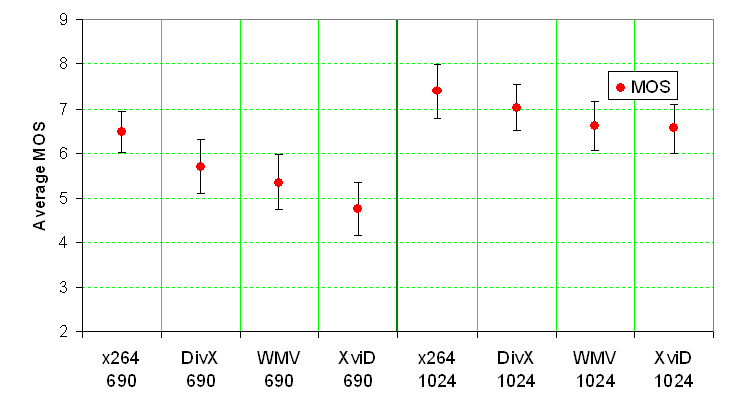

The following graph illustrates the average MOS for each codec and bitrate with its 95% confidence interval.

Average MOS for all codecs and bitrates

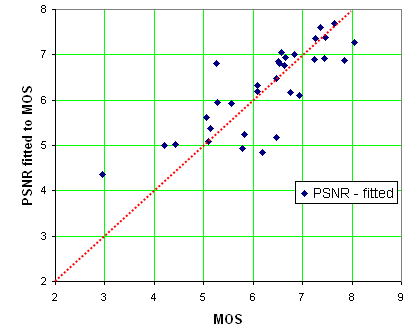

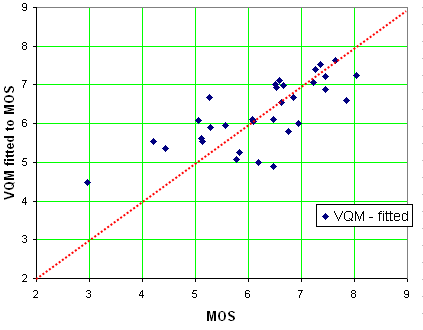

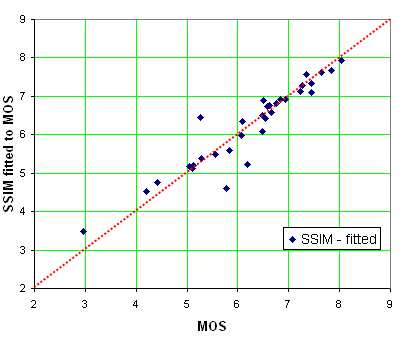
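A 95% confidence interval around a MOS value can be sketched as follows. This is an illustration under stated assumptions, not necessarily the procedure used in the PDF: it uses a normal approximation (critical value of about 1.96), which is reasonable for around 50 ratings, whereas an analysis may use Student's t distribution instead. The function name `mos_confidence_interval` and the ratings are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev

def mos_confidence_interval(scores, level=0.95):
    """Return (MOS, lower bound, upper bound) using a normal approximation."""
    n = len(scores)
    if n < 2:
        raise ValueError("at least two ratings are needed to estimate the spread")
    m = mean(scores)
    # Two-sided critical value: about 1.96 for a 95% interval.
    z = NormalDist().inv_cdf(0.5 + level / 2)
    # Sample standard deviation divided by sqrt(n) is the standard error of the mean.
    half_width = z * stdev(scores) / math.sqrt(n)
    return m, m - half_width, m + half_width

# Hypothetical ratings of one codec at one bitrate.
m, low, high = mos_confidence_interval([60, 64, 68, 71, 59, 66])
print(f"MOS = {m:.1f}, 95% CI = [{low:.1f}, {high:.1f}]")
```

A wider interval means the experts disagreed more or fewer ratings were collected; when the intervals of two codecs overlap heavily, the difference between them may not be significant.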

Evaluation of objective metrics

PSNR, VQM and SSIM were measured for each sequence with the MSU Video Quality Measurement Tool; descriptions of these metrics can be found on this page. Note that the “VQM” metric here refers to the algorithm by Feng Xiao, not to the objective quality metric proposed by VQEG. The following graphs illustrate the relation between subjective opinion and each objective metric: the closer the set of points lies to a straight line, the more adequate the metric is to subjectively perceived quality (PSNR and SSIM values were mapped onto the MOS scale; see the PDF with the comparison for details).
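Of the three metrics, PSNR is the simplest to state: for 8-bit samples it is 10 · log10(255² / MSE) dB, where MSE is the mean squared pixel difference between the reference and the compressed frame. The sketch below computes it for frames given as lists of pixel rows (for example, the luma plane). The function names are hypothetical, and averaging per-frame PSNR over a sequence is an assumed rule that may differ from what the MSU Video Quality Measurement Tool does.

```python
import math

def frame_psnr(reference, distorted, peak=255):
    """PSNR in dB between two equally sized frames given as lists of pixel rows."""
    if len(reference) != len(distorted) or not reference:
        raise ValueError("frames must be non-empty and have the same height")
    squared_error = 0
    pixel_count = 0
    for ref_row, dist_row in zip(reference, distorted):
        if len(ref_row) != len(dist_row):
            raise ValueError("frames must have the same width")
        for r, d in zip(ref_row, dist_row):
            squared_error += (r - d) ** 2
            pixel_count += 1
    if pixel_count == 0:
        raise ValueError("frames must contain pixels")
    mse = squared_error / pixel_count
    if mse == 0:
        return math.inf  # identical frames: PSNR is unbounded
    return 10 * math.log10(peak ** 2 / mse)

def sequence_psnr(reference_frames, distorted_frames):
    """Mean of per-frame PSNR values (an assumed averaging rule)."""
    if len(reference_frames) != len(distorted_frames) or not reference_frames:
        raise ValueError("sequences must be non-empty and have the same length")
    values = [frame_psnr(r, d) for r, d in zip(reference_frames, distorted_frames)]
    return sum(values) / len(values)

# Hypothetical 2x2 frames: the pixel errors are 1, 1, 0 and 2, so MSE = 1.5.
print(frame_psnr([[10, 20], [30, 40]], [[11, 19], [30, 42]]))
```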

On our test set, SSIM was far more adequate to the subjective opinion than PSNR and VQM.

Download

- MSU Subjective Comparison of Modern Video Codecs - PDF (1.38 MB)

- MSU Subjective Comparison of Modern Video Codecs - ZIP (1.2 MB)

